The mechanisms that a Trade Logic system would need

Previously

I introduced and specified Trade Logic, and posted a fair bit about it.

Now

Here's another post that's mostly a bullet-point list. In it I try to nail down the mechanisms TL needs in order to function as intended. Some of it, notably the treatment of selector bids, is still only partly defined.

The mechanisms that a TL system needs

-

Pre-market

-

Means to add definitions (understood to include selectors and bettable issues)

- Initiated by a user

- A definition is only accepted if it completely satisfies the typechecks

- Definitions that are accepted persist.
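The three bullets above can be sketched as a small store. This is only an illustration: `DefinitionStore` and its `typecheck` callable are hypothetical names standing in for TL's real typechecks, and refusing to overwrite an existing name is my assumption about what "persist" implies.

```python
from typing import Callable, Dict


class DefinitionStore:
    """Holds accepted definitions; rejects any that fail the typecheck."""

    def __init__(self, typecheck: Callable[[str, object], bool]):
        # Hypothetical stand-in for TL's typechecks.
        self._typecheck = typecheck
        self._definitions: Dict[str, object] = {}

    def add(self, name: str, body: object) -> bool:
        """Called on behalf of a user; returns True if the definition is accepted."""
        # Assumption: an accepted definition is never overwritten.
        if name in self._definitions or not self._typecheck(name, body):
            return False
        self._definitions[name] = body  # accepted definitions persist
        return True

    def get(self, name: str) -> object:
        return self._definitions[name]
```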


-

In active market

-

Holdings

- A user's holdings are persistent except as modified by the other mechanisms here.

-

Trading

- Initiated by a user

-

User specifies:

- What to trade

- Buy or sell

- Price

- How much

- How long the order is to persist.

-

A trade is accepted just if:

- The user holds that amount or more of what he's selling.

- Either it can be met from booked orders

- Or it is to be retained as a booked order

-

Booked orders persist until either:

- Acted on

- Cancelled

- Timed out
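Here is a rough sketch of the acceptance test for trades. It assumes that "can be met from booked orders" and "is to be retained as a booked order" are alternatives, that a buyer is selling units, and that an order is only matched whole. The names (`Order`, `submit`, the `"unit"` holding) are hypothetical, and actually moving holdings after a match is left out.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Order:
    user: str
    issue: str         # what to trade
    side: str          # "buy" or "sell"
    price: float       # units per share
    amount: float      # how much
    expires_at: float  # how long the order persists, as an absolute time


def gives_up(order: Order) -> Tuple[str, float]:
    """What the user hands over: shares if selling, units if buying."""
    if order.side == "sell":
        return order.issue, order.amount
    return "unit", order.amount * order.price


def matches(new: Order, booked: Order, now: float) -> bool:
    """True if a live booked order can fill the new order completely."""
    if booked.expires_at <= now or booked.issue != new.issue:
        return False
    if booked.side == new.side or booked.amount < new.amount:
        return False
    if new.side == "buy":
        return booked.price <= new.price
    return booked.price >= new.price


def submit(order: Order, holdings: Dict[str, Dict[str, float]],
           book: List[Order], now: float) -> str:
    """Return "rejected", "matched" or "booked"."""
    asset, needed = gives_up(order)
    if holdings.get(order.user, {}).get(asset, 0.0) < needed:
        return "rejected"  # user does not hold enough of what is being sold
    if any(matches(order, booked, now) for booked in book):
        return "matched"   # transferring holdings is omitted in this sketch
    if order.expires_at > now:
        book.append(order)  # retained until acted on, cancelled or timed out
        return "booked"
    return "rejected"
```

An order whose persistence has already run out can only be accepted by matching immediately, which is why the last branch rejects it.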

-

Issue conversion

- Initiated by a user

-

User specifies:

- What to convert from

- What to convert to

- How much

-

A conversion is accepted just if:

- It's licensed by one of the conversion rules

- User has sufficient holdings to convert from
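Issue conversion might look roughly like this. The conversion rules themselves are defined elsewhere, so here a rule is just a hypothetical predicate on (from, to), and I assume for simplicity that conversion is one share for one share.

```python
from typing import Callable, Dict, Iterable

# Hypothetical: a rule answers "do I license converting src into dst?"
Rule = Callable[[str, str], bool]


def convert(holdings: Dict[str, float], src: str, dst: str, amount: float,
            rules: Iterable[Rule]) -> bool:
    """Convert `amount` of src into dst if accepted; otherwise change nothing."""
    if amount <= 0 or holdings.get(src, 0.0) < amount:
        return False  # insufficient holdings to convert from
    if not any(rule(src, dst) for rule in rules):
        return False  # not licensed by any conversion rule
    holdings[src] -= amount
    holdings[dst] = holdings.get(dst, 0.0) + amount  # assumed one-for-one
    return True
```

For example, with a made-up double-negation rule `lambda s, d: s == "not not " + d`, holdings of "not not rain" could be converted to "rain" and nothing else.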


-

Selectors - where the system meets the outside world. Largely TBD.

-

As part of each selector issue, there is a defined mechanism that can be invoked.

-

Its actions when invoked are to:

-

Present itself to the outside world as invoked.

- Also present its invocation parameters.

- But not reveal whether it was invoked for settlement (randomly) or as a challenge (user-selected values)

-

Be expected to act in the outside world:

- Make a unique selection

- Further query that unique result. This implies that selector issues are parameterized on the query, but that's still TBD.

- Translate the result(s) of the query into language the system understands.

- Present itself to the system as completed.

-

Accept from the real world the result(s) of that query.

- How it is described to the system is TBD. Possibly the format it is described in is yet another parameter of a selector.


- How an invocable mechanism is described to the system is still to be decided.

- (NB, a selector's acceptability is checked by the challenge mechanism below. Presumably those selectors that are found viable will have been defined in such a way as to be randomly inspectable etc; that's beyond my scope in this spec)


-

Challenge mechanism to settle selector bets

-

Initiated by challengers

- (What's available to pro-selector users is TBD, but probably just uses the betting mechanism above)

-

Challenger specifies:

-

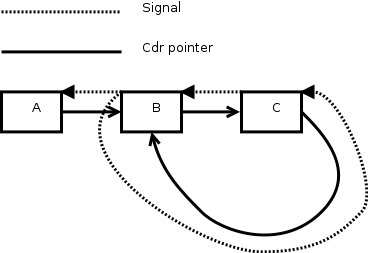

The value that the choice input stream should have. It is basically a stream of bits, but:

- Where split-random-stream is encountered, it will split and the challenger will specify both branches.

- Challenger can specify that from some point on, random bits are used.

- Probably some form of good-faith bond or bet on the outcome


- The selector is invoked with the given values.

- Some mechanism queries whether exactly one individual was returned (partly undecided).

- The challenge bet is settled accordingly.


- There may possibly also be provision for multi-turn challenges ("I bet you can't specify X such that I can't specify f(X)") but that's to be decided.
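To make the challenger's choice input stream a little more concrete, here is a sketch. `ChoiceStream` is a hypothetical name: it hands out the challenger's bits first, falls back to random bits if the challenger asked for that, and on a split returns the two branches the challenger supplied. How a real selector would consume it is still TBD.

```python
import random
from typing import List, Optional, Tuple


class ChoiceStream:
    """Bits chosen by a challenger, optionally followed by random bits."""

    def __init__(self, bits: List[int],
                 branches: Optional[Tuple["ChoiceStream", "ChoiceStream"]] = None,
                 then_random: bool = False,
                 rng: Optional[random.Random] = None):
        self._bits = list(bits)
        self._branches = branches        # what to use where the stream splits
        self._then_random = then_random  # "from some point on, random bits are used"
        self._rng = rng or random.Random()

    def next_bit(self) -> int:
        if self._bits:
            return self._bits.pop(0)
        if self._then_random:
            return self._rng.getrandbits(1)
        raise ValueError("challenger specified too few bits")

    def split(self) -> Tuple["ChoiceStream", "ChoiceStream"]:
        """Called where the selector reaches split-random-stream."""
        if self._branches is not None:
            return self._branches
        if self._then_random:
            # Past the scripted part, both branches are simply random.
            return (ChoiceStream([], then_random=True, rng=self._rng),
                    ChoiceStream([], then_random=True, rng=self._rng))
        raise ValueError("challenger did not specify both branches")
```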


-

Settling (Usually post-market)

-

Public fair random-bit generating

- Initiated as selectors need it.

-

For selectors, some interface for retrieving bits just as needed, so that we may use pick01 etc. without physically requiring infinite bits.

- This interface should not reveal whether it's providing random bits or bits given in a challenge.
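As an illustration of retrieving bits just as needed, the sketch below decides whether a uniformly chosen number in [0, 1) falls below a threshold while consuming only as many bits as the comparison requires. It is not a definition of pick01, whose semantics I am not assuming here. `BitSource` and `below_threshold` are hypothetical names. The consumer sees only a function returning 0 or 1, so it cannot tell random bits from challenge bits.

```python
import random
from typing import Callable

# Returns 0 or 1; callers cannot tell where the bits come from.
BitSource = Callable[[], int]


def below_threshold(next_bit: BitSource, p: float, max_bits: int = 64) -> bool:
    """Decide whether a lazily generated uniform number in [0, 1) is below p."""
    for _ in range(max_bits):
        # Peel off the next binary digit of p.
        p *= 2
        p_bit = 1 if p >= 1 else 0
        p -= p_bit
        # Fetch one more digit of the random number only now.
        u_bit = next_bit()
        if u_bit != p_bit:
            return u_bit < p_bit  # first differing digit settles the comparison
    return False  # equal to the precision examined, so not below


# For settlement, the source would be the public fair random-bit generator;
# here Python's generator stands in for it.
random_source: BitSource = lambda: random.getrandbits(1)
```

With a fair source the expected number of bits consumed is about two, however many digits the threshold has.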

-

Mechanism to invoke selectors wrt a given bet:

-

It decides:

- Whether to settle it at the current time.

- If applicable, "how much" to settle it, for statistical bets that admit partial settlement

-

Probably a function of:

- The cost of invoking its selectors

- Random input

- An ancillary market judging the value of settlement

- Logic that relates the settlement of convertible bets. (To be decided, probably something like shares of the ancillary markets of convertible bets being in turn convertible)

- Once activated, it invokes the particular selectors with fresh random values and queries them.

-

In accordance with the results of the query:

- Shares of one side of the bet are converted to units

- Shares of the other side are converted to zeros.

-

But there will also be allowance for partial results:

- Partial settlement

- Sliding payoffs
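The conversion of shares at settlement can be sketched as below. Modelling partial settlement as a single `fraction` between 0 and 1 is my simplifying assumption, as are the function name and the `"unit"` holding. Sliding payoffs are not covered.

```python
from typing import Dict


def settle(holdings: Dict[str, float], winner: str, loser: str,
           fraction: float = 1.0) -> None:
    """Convert a fraction of winning shares to units and of losing shares to zeros."""
    if not 0.0 <= fraction <= 1.0:
        raise ValueError("fraction must be between 0 and 1")
    won = holdings.get(winner, 0.0) * fraction
    lost = holdings.get(loser, 0.0) * fraction
    holdings[winner] = holdings.get(winner, 0.0) - won
    holdings[loser] = holdings.get(loser, 0.0) - lost
    # Winning shares become units; losing shares become zeros, i.e. vanish.
    holdings["unit"] = holdings.get("unit", 0.0) + won
```

Calling it with `fraction=0.5` settles half of each side and leaves the rest outstanding for a later settlement.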
